
Home-Based Motion Capture System for Assessing Upper-Limb Impairment

Undergraduate

Mocap, AI

2024

This project developed a home-based application for assessing upper-limb impairment using the Azure Kinect motion-capture system. Integrating mixed and augmented reality with gamification, the application offers tailored assessments of range of motion, stability, mobility, and precision, delivering real-time feedback and progress tracking to promote patient engagement during rehabilitation.

Overview

This project aimed to demonstrate the feasibility of a home-based motion-capture application that allows patients to perform regular, autonomous assessments of their upper-limb mobility. By combining the Azure Kinect depth sensor with mixed reality (XR) and augmented reality (AR) environments, the system provides instant feedback and lays the groundwork for a future virtual therapist expert system.

The three core objectives were: (1) measure kinematic arm data using motion capture, (2) assess and provide quantitative feedback on upper-limb mobility, and (3) develop an intuitive, accessible application for physically impaired users.

At a glance: 4 gamified assessments, 20 one-take survey participants, a 14-day longitudinal study, and 125 sensor configurations tested.

Methods

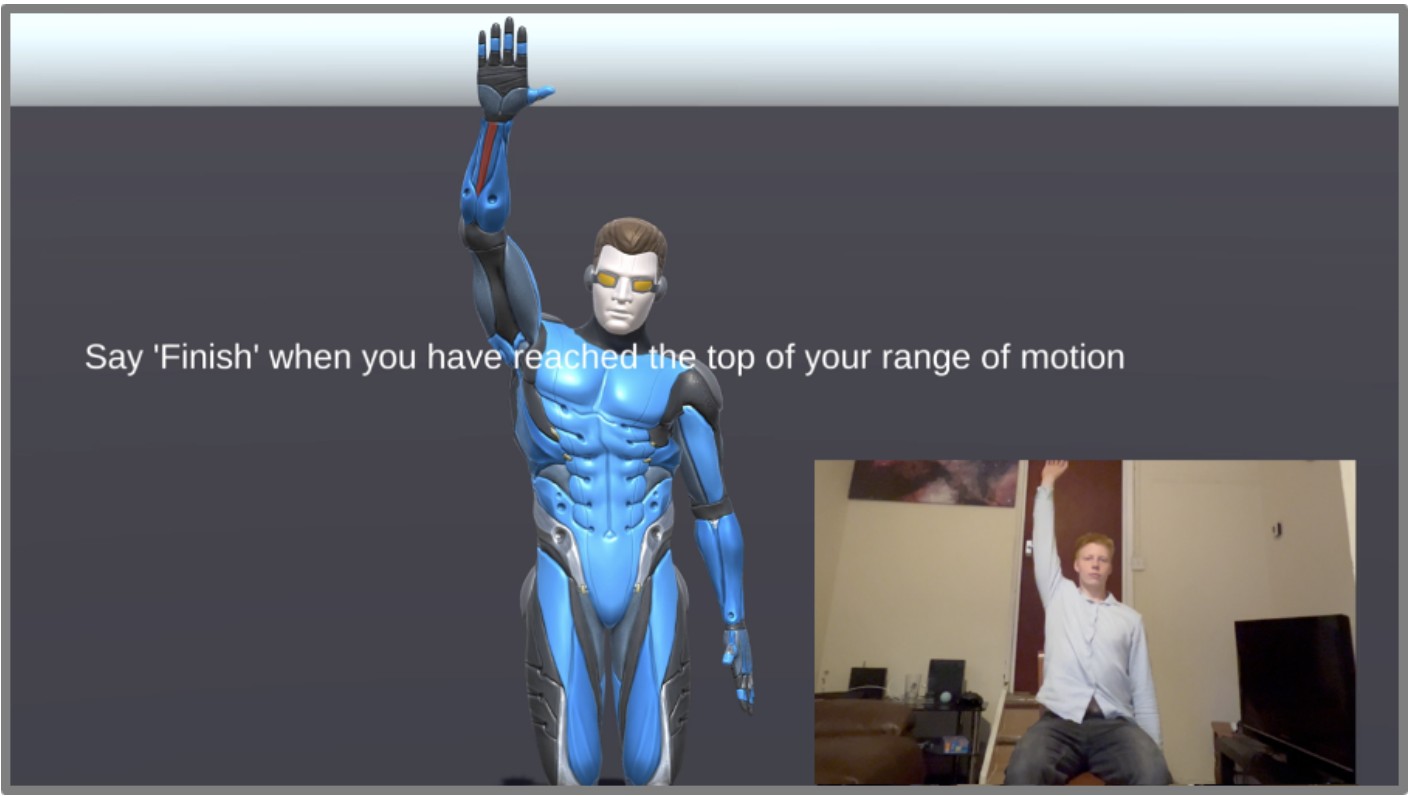

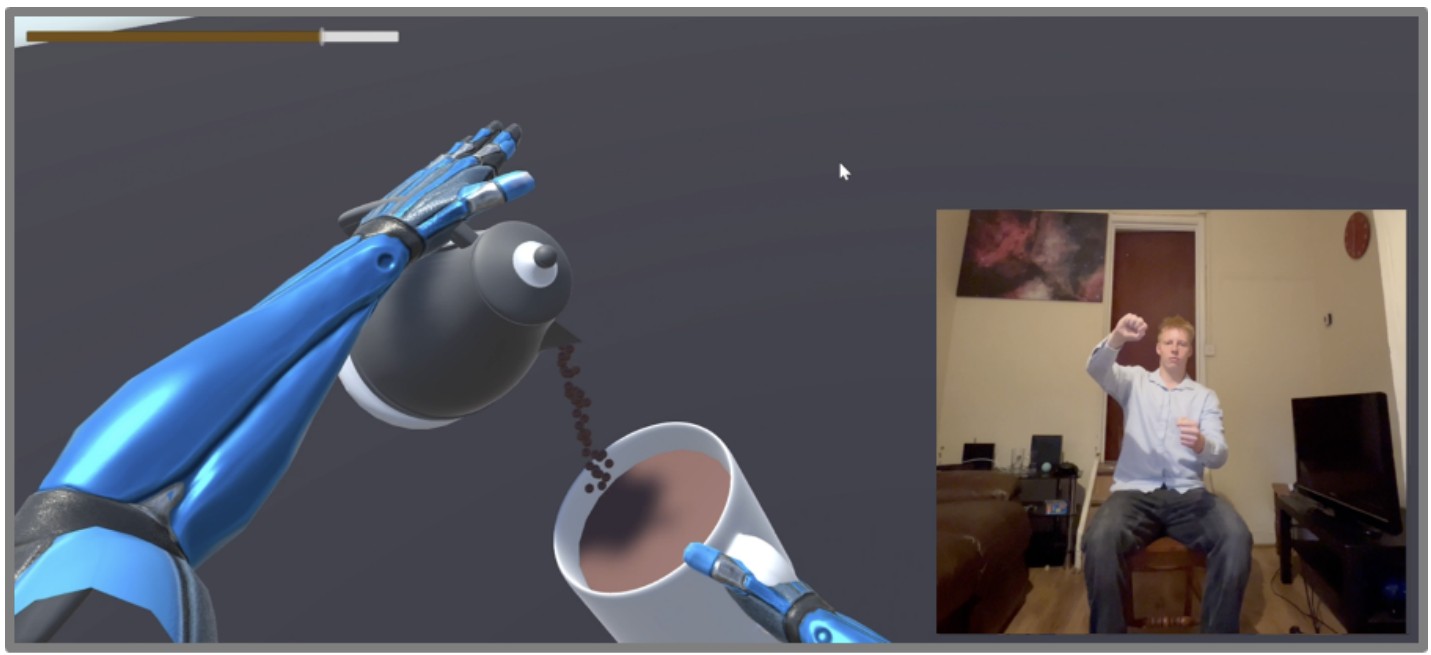

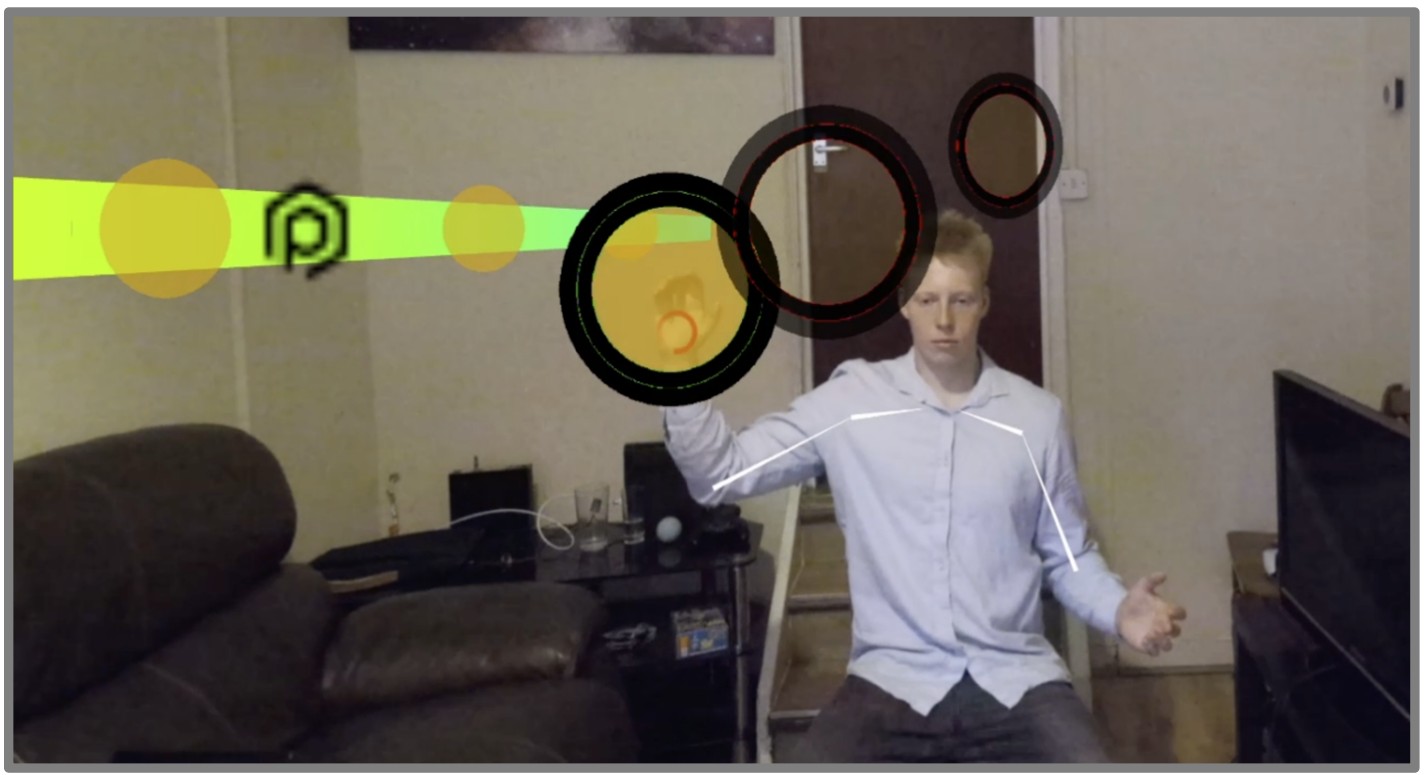

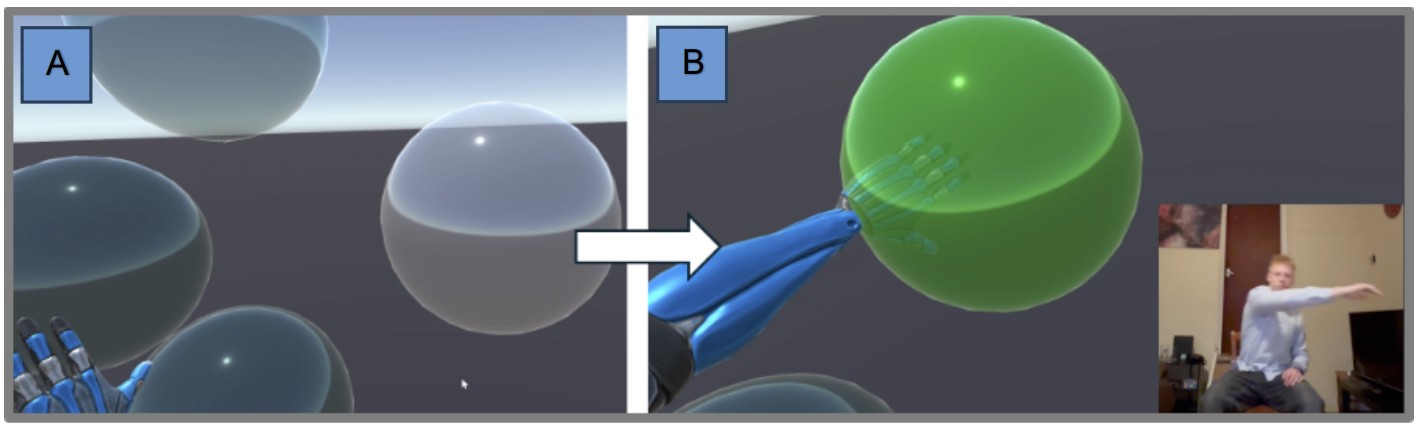

Four Gamified Assessments: The application was built in Unity using the Azure Kinect Body Tracking SDK. Four complementary assessment scenes were designed, each targeting different kinematic variables. Users controlled the application with voice commands ("pause", "play", "skip") and could complete every assessment while seated. An on-screen avatar mirrored the user's movements, making each task easier to understand.
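As an illustration of how a kinematic variable can be derived from body-tracking output, the sketch below computes a joint angle from three 3D joint positions (for example shoulder, elbow and wrist) and takes the range of motion as the spread of that angle across a recording. This is a minimal sketch under stated assumptions, not the project's implementation: the function names and sample coordinates are hypothetical, and it assumes positions arrive as (x, y, z) tuples, as the Azure Kinect Body Tracking SDK reports them (in millimetres).

```python
import math
from typing import Sequence, Tuple

# A 3D joint position, e.g. (x, y, z) in millimetres as reported by the
# Azure Kinect Body Tracking SDK (hypothetical representation for this sketch).
Vec3 = Tuple[float, float, float]


def joint_angle_deg(proximal: Vec3, joint: Vec3, distal: Vec3) -> float:
    """Angle at `joint` between the segments joint->proximal and joint->distal."""
    u = [p - j for p, j in zip(proximal, joint)]
    v = [d - j for d, j in zip(distal, joint)]
    norm_u, norm_v = math.hypot(*u), math.hypot(*v)
    if norm_u == 0 or norm_v == 0:
        raise ValueError("coincident joints: angle is undefined")
    cos_a = sum(a * b for a, b in zip(u, v)) / (norm_u * norm_v)
    # Clamp to [-1, 1] so floating-point rounding cannot break acos.
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_a))))


def range_of_motion(frames: Sequence[Tuple[Vec3, Vec3, Vec3]]) -> float:
    """Range of motion (degrees) of one joint angle across a recording."""
    angles = [joint_angle_deg(*frame) for frame in frames]
    return max(angles) - min(angles)


# Hypothetical shoulder/elbow/wrist positions for two frames:
# a fully extended arm (180 degrees) and a right-angle bend (90 degrees).
frames = [
    ((0, 0, 0), (0, -300, 0), (0, -600, 0)),
    ((0, 0, 0), (0, -300, 0), (300, -300, 0)),
]
print(round(range_of_motion(frames), 1))  # 90.0
```

Working from positions rather than raw sensor data keeps the calculation independent of where the camera is placed, since the angle between two limb segments does not change when the whole body is translated or rotated.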

Each assessment produced a compound score out of 100, calibrated against a healthy-user database built from a one-take survey of 20 participants. Each measured value was compared with a reference point one standard deviation below the healthy mean, normalised over a span of three standard deviations, and clamped.
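The scoring rule above leaves some details open, so the following is one possible reading rather than the project's formula. It assumes that a value at or beyond the reference point (healthy mean minus one SD) scores 100, that the score falls linearly to 0 over a further three SDs, and that lower-is-better metrics such as time taken are mirrored around the mean. The equal-weight averaging in `compound_score`, the metric names and the sample norms are all hypothetical.

```python
from statistics import mean, stdev
from typing import Dict, Mapping, Sequence, Tuple

# (healthy mean, healthy SD, higher_is_better) for one metric.
Norm = Tuple[float, float, bool]


def build_norms(healthy: Mapping[str, Sequence[float]],
                higher_is_better: Mapping[str, bool]) -> Dict[str, Norm]:
    """Summarise the healthy-user database as a mean and sample SD per metric."""
    return {name: (mean(vals), stdev(vals), higher_is_better[name])
            for name, vals in healthy.items()}


def metric_score(value: float, norm: Norm) -> float:
    """Score one metric out of 100 (one possible reading of the scheme).

    Assumed: the reference point is one SD below the healthy mean (one SD
    above it for lower-is-better metrics such as time taken). Values at or
    past the reference score 100; the score then falls linearly to 0 over
    a further three SDs and is clamped to [0, 100].
    """
    mu, sd, higher_is_better = norm
    if sd <= 0:
        raise ValueError("healthy SD must be positive")
    if higher_is_better:
        shortfall = ((mu - sd) - value) / sd
    else:
        shortfall = (value - (mu + sd)) / sd
    return 100.0 * max(0.0, min(1.0, 1.0 - shortfall / 3.0))


def compound_score(values: Mapping[str, float], norms: Mapping[str, Norm]) -> float:
    """Equal-weight mean of the per-metric scores (weighting is an assumption)."""
    return mean(metric_score(v, norms[name]) for name, v in values.items())


# Hypothetical norms: range of motion in degrees, time taken in seconds.
norms = {"rom": (100.0, 10.0, True), "time": (10.0, 2.0, False)}
print(metric_score(75.0, norms["rom"]))                    # 50.0
print(compound_score({"rom": 75.0, "time": 12.0}, norms))  # 75.0
```

Anchoring full marks at one SD below the healthy mean means ordinary variation among healthy users is not penalised. The three-SD span sets how quickly the score drops as performance becomes atypical.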

Performance Maximisation

An experiment tested 125 sensor configurations (5 heights × 5 tilt angles × 5 distances) to find the conditions that gave the most reliable body tracking. Each configuration was scored by counting the seconds of erroneous tracking during a standardised 6-second seated routine, which was performed twice per configuration for reliability.
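The procedure above is a full factorial grid search, and it can be sketched as follows. This sketch rests on several assumptions. It treats a second as erroneous if any frame within it was badly tracked, and it assumes 30 fps. The `run_trial` callback and `fake_trial` function are hypothetical, and the grid levels in the example are placeholders, not the values used in the study.

```python
import itertools
import math
from typing import Callable, List, Sequence, Tuple

# (height_m, tilt_deg, distance_m); level values used below are placeholders.
Config = Tuple[float, float, float]


def erroneous_seconds(frame_ok: Sequence[bool], fps: int = 30) -> int:
    """Count seconds containing at least one badly tracked frame.

    Assumption: tracking quality is judged per frame, and a second is
    'erroneous' if any of its frames failed.
    """
    n_seconds = math.ceil(len(frame_ok) / fps)
    return sum(1 for s in range(n_seconds)
               if not all(frame_ok[s * fps:(s + 1) * fps]))


def rank_configs(heights: Sequence[float], tilts: Sequence[float],
                 distances: Sequence[float],
                 run_trial: Callable[[Config], Sequence[bool]],
                 trials: int = 2) -> List[Tuple[int, Config]]:
    """Evaluate every height x tilt x distance combination, best first."""
    results = []
    for cfg in itertools.product(heights, tilts, distances):
        # Sum erroneous seconds over repeated runs of the same routine.
        total = sum(erroneous_seconds(run_trial(cfg)) for _ in range(trials))
        results.append((total, cfg))
    results.sort(key=lambda r: r[0])  # stable sort: ties keep grid order
    return results


# Simulated 6 s routine at 30 fps that tracks perfectly at one made-up setting.
def fake_trial(cfg: Config) -> List[bool]:
    return [True] * 180 if cfg == (1.5, -20.0, 1.5) else [False] * 180


ranking = rank_configs([1.0, 1.25, 1.5, 1.75, 2.0],
                       [0.0, -10.0, -20.0, -30.0, -40.0],
                       [1.0, 1.25, 1.5, 1.75, 2.0],
                       fake_trial)
print(len(ranking), ranking[0])  # 125 (0, (1.5, -20.0, 1.5))
```

With five levels on each of the three axes, `itertools.product` yields 5 × 5 × 5 = 125 configurations, which matches the count reported above.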

Tracking was best with the sensor mounted at a height of 1.4–1.6 m, tilted between −15° and −30°, and placed 1.4–1.6 m from the user. Counter-intuitively, a flat 0° orientation performed poorly, likely because raised arms hid the shoulder and elbow joints from the sensor's view.

Results

One-Take Survey (n=20): Twenty healthy adults each completed a single session. Shapiro-Wilk tests found no significant departure from normality for any parameter except Flow time-taken (p = 0.043). That parameter showed a bimodal pattern: some users understood the mug-grabbing mechanic immediately, while others did not.

Two-Week Longitudinal Study (n=3): Three healthy users completed the assessments daily for 14 days. Each assessment showed a distinct learning curve, and scores stabilised only after a 5–6 day adjustment period. This period was then used to calibrate the difficulty ramp-up algorithm.
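An adjustment period like this can be estimated with a simple plateau-detection heuristic. The sketch below returns the first day from which every remaining daily score stays within a tolerance of those scores' own mean. The source does not describe how stabilisation was actually determined, so this rule, the `stabilisation_day` function, its `tolerance` and `min_tail` defaults, and the synthetic scores are all hypothetical.

```python
from statistics import mean
from typing import Optional, Sequence


def stabilisation_day(daily_scores: Sequence[float], tolerance: float = 3.0,
                      min_tail: int = 3) -> Optional[int]:
    """First (1-based) day from which every remaining score lies within
    `tolerance` points of the mean of those remaining scores.

    Returns None if the scores never settle with at least `min_tail` days left.
    """
    for start in range(len(daily_scores) - min_tail + 1):
        tail = daily_scores[start:]
        centre = mean(tail)
        if all(abs(s - centre) <= tolerance for s in tail):
            return start + 1
    return None


# Synthetic 14-day learning curve (not study data).
scores = [40, 52, 61, 68, 73, 76, 77, 78, 76, 78, 77, 79, 78, 77]
print(stabilisation_day(scores))  # 6
```

Requiring a minimum tail length stops the last day or two from counting as a plateau. A ramp-up schedule could then hold difficulty constant until the estimated stabilisation day.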

Key Findings

The project demonstrated that a home-based, non-immersive motion-capture system can deliver reliable and engaging upper-limb assessments. Healthy participants produced homogeneous results across all four assessments, suggesting the system could meaningfully distinguish atypical motion patterns in impaired users. Favouring AR/XR over VR is also a practical choice: only 1–2% of older UK adults own a VR headset, compared with 58–84% who own a laptop.